I could point out that the Linux kernel was never designed to support out-of-tree modules, let alone proprietary ones. The reason has nothing to do with the kernel's driver-loading interface (?!?!?) and everything to do with the distributions' reluctance to be forced into keeping a stable API across major releases on the one hand, and their wish to prevent compatibility issues on the other, as even a minute change in one of the kernel's structures or APIs can crash your system (hence, you are forced to rebuild your module against the latest kernel headers). As far as I know, a driver that was built against RHEL 5.4 can be used with all RHEL 5.4-series kernels. Most distributions (if not all) enable module version checking in order to *force* out-of-tree kernel modules to be recompiled against the latest kernel build, or at least against the latest major kernel build.

Now, the kernel team could trivially provide a stable driver-loading interface so that this didn't have to happen; they have quite deliberately elected not to do so.

As far as I remember, you can configure the kernel to ignore build versions (CONFIG_MODVERSIONS?).

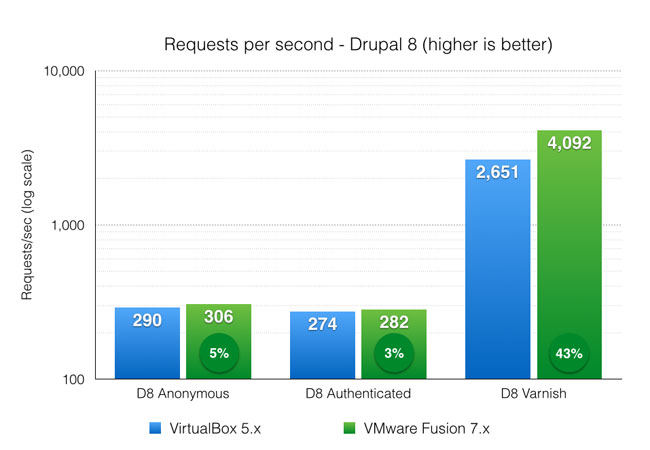

# VMware vs VirtualBox vs Hyper-V drivers

That's because those drivers are actually kernel modules, which have to have bits of them built against the source tree used to build the kernel that's meant to load them. I'm talking about a way to not have to rebuild all your third-party drivers every time you update the kernel, like I get to do now (which is in no way a hassle, and never fails or leaves my system in an unstable state! Really!).

Every time Red Hat pushes out a kernel update, I have to re-run the VirtualBox installer to rebuild the kernel driver, and I get to uninstall and reinstall the nVidia driver. There is a difference, at least between a kernel module the way the Linux kernel does it and a driver the way Windows does it.

I’m talking about loading an arbitrary, external binary driver, not a kernel module.